Apache Airflow: Powerful Data Pipelines as Code, but Dominated by Astronomer’s Contributions

Trickle-down economics may not have delivered its promised benefits in the U.S. under President Ronald Reagan, but it finds an unexpected parallel in the world of open-source software. There, the idea that elite developers produce high-quality code that eventually benefits the broader community seems to work quite well.

This isn’t about economic policy, of course, but about top-tier engineering teams building software that ends up powering widespread, mainstream applications. Lyft’s Envoy has become a cornerstone of service proxy technology, and Google’s Kubernetes is a powerful container orchestration system that Google positioned to outmaneuver competitors like AWS. Airbnb’s Apache Airflow is no less significant: it changed how data pipelines are built by letting engineers define, schedule, and manage workflows as code.

Today, Airflow is a vital tool for a diverse array of large enterprises, including Walmart, Adobe, and Marriott. Companies like Snowflake and Cloudera contribute to the project, but a substantial share of development and maintenance is handled by Astronomer, which employs 16 of the top 25 Airflow contributors and offers a managed service called Astro. Astronomer’s stewardship is crucial, yet several cloud providers have rolled out their own managed Airflow services, often without contributing code back to the open-source project. That imbalance raises concerns about the sustainability of the project’s contribution model.

The adage that “code doesn’t write itself” points to a fundamental issue: maintaining and evolving open-source projects requires sustained investment of both money and engineering time. Without a steady flow of resources, even the most impactful projects can struggle to thrive.

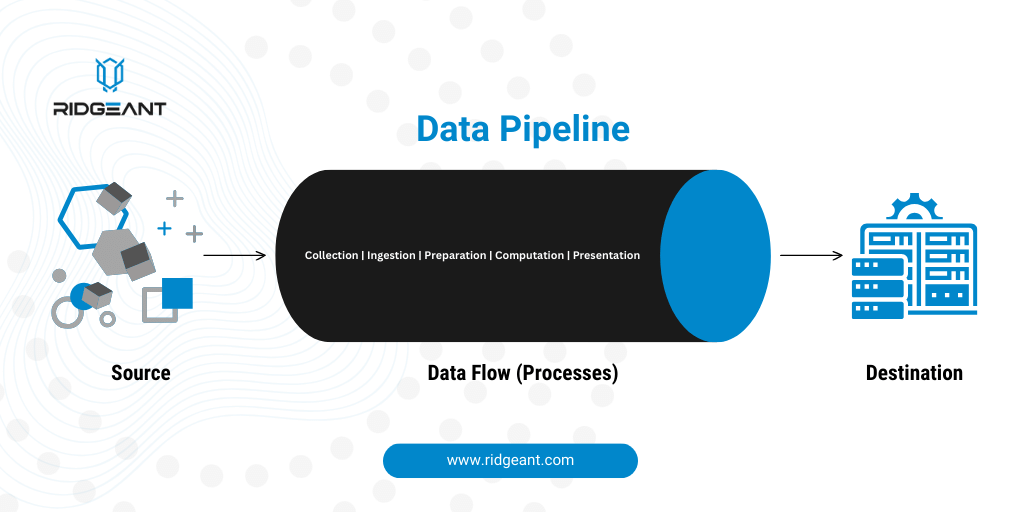

So, what exactly is a data pipeline? At its simplest, it is a sequence of steps that pulls data out of one system, transforms it, and delivers it to another. Today’s buzzwords may be large language models (LLMs) and generative AI (genAI), but the underlying challenge of managing data hasn’t changed: companies need reliable ways to move and process data across many different systems. Airflow addresses this need by acting as a scheduler and orchestrator for data workflows.

Essentially, Airflow is a far more capable successor to traditional cron job schedulers. Cron simply runs each job at a fixed time; Airflow lets teams declare which tasks depend on which, then schedules and runs them in the right order, so they can connect disparate systems and manage the flow of data between them. As data ecosystems grow more complex, so do the systems that manage them, and Airflow keeps that complexity in check by providing a single framework for planning and orchestrating data processes. Because it is written in Python, a language most data engineers and data scientists already use, it fits naturally into how modern data teams work, which has helped make it a critical tool for enterprise data management.
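To make the “pipelines as code” idea concrete, here is a minimal, hypothetical sketch in plain Python. It is not Airflow’s API: the `run_pipeline` helper and the `extract`, `transform`, and `load` tasks are invented for illustration. What it shows is the core difference from cron: each task declares its upstream dependencies, and a runner executes the tasks in dependency order and retries transient failures, instead of firing independent jobs at fixed times.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Hypothetical task signature: each task receives the results of tasks that already ran.
# This only mimics the "pipelines as code" idea; it is NOT Airflow's API.
Task = Callable[[dict], object]


def run_pipeline(tasks: dict[str, tuple[Task, set[str]]], max_retries: int = 2) -> dict[str, object]:
    """Run tasks in dependency order, retrying each failing task up to max_retries times."""
    # Map each task name to the set of task names it depends on.
    graph = {name: deps for name, (_, deps) in tasks.items()}
    results: dict[str, object] = {}
    # static_order() yields every task after all of its dependencies
    # (and raises graphlib.CycleError if the dependencies form a cycle).
    for name in TopologicalSorter(graph).static_order():
        func, _ = tasks[name]
        for attempt in range(max_retries + 1):
            try:
                results[name] = func(results)
                break
            except Exception:
                if attempt == max_retries:
                    raise  # out of retries: surface the original error
    return results


def extract(_: dict) -> list[dict]:
    # Stand-in for pulling rows from a source system.
    return [{"city": "Paris", "bookings": 3}, {"city": "Lima", "bookings": 5}]


def transform(results: dict) -> int:
    # Aggregate the rows produced by the upstream "extract" task.
    return sum(row["bookings"] for row in results["extract"])


def load(results: dict) -> str:
    # Stand-in for writing the aggregate to a warehouse.
    return f"loaded total={results['transform']}"


# Each entry: task name -> (callable, names of upstream tasks).
pipeline = {
    "extract": (extract, set()),
    "transform": (transform, {"extract"}),
    "load": (load, {"transform"}),
}

if __name__ == "__main__":
    print(run_pipeline(pipeline)["load"])  # prints "loaded total=8"
```

In Airflow itself, the equivalent structure is a DAG of tasks, with scheduling, retries, and monitoring handled by the platform rather than by a hand-rolled loop like this one.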

Funding and sustaining such critical tools is a pressing challenge. As Airflow becomes ever more central to enterprise data pipelines, the question of who will support and maintain it over the long term only grows more important.