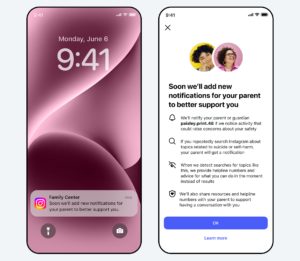

Instagram is introducing a new safety feature designed to notify parents if their teenage children repeatedly search for content related to suicide or self-harm within a short period of time.

Announced via Meta’s newsroom, the feature applies to families who use Instagram’s parental monitoring tools for teen accounts. When a pattern of sensitive search activity is detected, parents will receive a “teen safety alert” through the app, email, text message, or WhatsApp.

Alongside the alert, parents will receive guidance from mental health experts on how to approach potentially difficult conversations in a supportive and constructive way. The goal, Meta says, is to encourage early intervention and open communication rather than punitive responses.

The self-harm risk alerts will initially roll out in the United States, the United Kingdom, Australia, and Canada, with plans to expand to additional regions later this year.

Meta also indicated that similar AI-powered parental alerts for other types of concerning activity are in development and expected to launch in the coming months. The company has increasingly focused on teen safety features amid ongoing scrutiny over social media’s impact on young users.